Simple Algorithms

This page shows and explains a few simple computer vision algorithms.

Background Subtraction

If we want to detect motion in an image, for example, it helps to have a kind of "green screen" that draws only the regions that change. We achieve this with so-called background removal or background subtraction. To do so, we compare a static reference image with the live video image. If the difference between the two images (that is, the difference between the color values at the same position) is greater than a given threshold, we can assume that motion is taking place at that point. In the sketch below, a mouse click stores the current video frame as the reference image. One drawback: the automatic brightness correction of the iSight camera works against this method, presumably because it changes pixel values even where nothing in the scene has moved.

import processing.video.*;

Capture video;
PImage backgroundImage;
float threshold = 20;

void setup() {
  size(640, 480);

  // start video capture
  video = new Capture(this, width, height, 30);
  video.start();

  // prepare image to store the background
  backgroundImage = createImage(video.width, video.height, RGB);
}

void draw() {
  // read camera image if available
  if (video.available()) {
    video.read();
  }

  // activate pixel manipulation of the canvas
  loadPixels();

  // get pixel data from video and background image
  video.loadPixels();
  backgroundImage.loadPixels();

  // loop through the video pixel by pixel
  for (int x = 0; x < video.width; x++) {
    for (int y = 0; y < video.height; y++) {
      // get pixel array location
      int loc = x + y * video.width;

      // get foreground color (video)
      color fgColor = video.pixels[loc];

      // get background color (image)
      color bgColor = backgroundImage.pixels[loc];

      // get individual color channels
      float r1 = red(fgColor);
      float g1 = green(fgColor);
      float b1 = blue(fgColor);
      float r2 = red(bgColor);
      float g2 = green(bgColor);
      float b2 = blue(bgColor);

      // calculate the Euclidean distance between the two colors in RGB space
      float dist = dist(r1, g1, b1, r2, g2, b2);

      // check if distance is above threshold
      if (dist > threshold) {
        // write foreground pixel
        pixels[loc] = fgColor;
      } else {
        // set pixel to black
        pixels[loc] = color(0);
      }
    }
  }

  // write pixels back to canvas
  updatePixels();
}

void mousePressed() {
  // copy current video frame into background image
  backgroundImage.copy(video, 0, 0, video.width, video.height, 0, 0, video.width, video.height);
  backgroundImage.updatePixels();
}
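The thresholding step from the sketch above can also be tried without a camera. The following is a minimal sketch in plain Java, assuming pixels are stored as packed 0xRRGGBB integers (the alpha byte is ignored); the class name BackgroundMask and its methods are hypothetical helpers for illustration and not part of Processing.

```java
// Hypothetical helper (not from the original sketch): background
// subtraction on packed 0xRRGGBB ints, without the Processing runtime.
final class BackgroundMask {

  // Euclidean distance between two packed colors in RGB space.
  static double colorDistance(int a, int b) {
    int dr = ((a >> 16) & 0xFF) - ((b >> 16) & 0xFF);
    int dg = ((a >> 8) & 0xFF) - ((b >> 8) & 0xFF);
    int db = (a & 0xFF) - (b & 0xFF);
    return Math.sqrt(dr * dr + dg * dg + db * db);
  }

  // Keeps a frame pixel where it differs from the background by more
  // than the threshold and writes black everywhere else.
  static int[] subtract(int[] frame, int[] background, double threshold) {
    int[] out = new int[frame.length];
    for (int i = 0; i < frame.length; i++) {
      boolean changed = colorDistance(frame[i], background[i]) > threshold;
      out[i] = changed ? frame[i] : 0x000000;
    }
    return out;
  }

  public static void main(String[] args) {
    int[] background = {0x101010, 0x101010, 0x101010};
    int[] frame      = {0x101010, 0x141414, 0xFF0000};
    for (int p : subtract(frame, background, 20)) {
      System.out.println(String.format("%06X", p));
    }
  }
}
```

With a threshold of 20, the slightly brighter second pixel (a distance of about 7) is still treated as background, while the red pixel is kept. This also illustrates why a global brightness change is a problem: once it shifts every pixel by more than the threshold, the whole frame counts as foreground.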

Brightest Point

For controlling an interface directly, it can be helpful to know where the brightest point in an image is. To find it, we create a PVector and a variable that holds the highest brightness value found so far in the current frame. By comparing the brightness values across the whole frame, the brightest point can be determined very quickly.

import processing.video.*;

Capture video;

void setup() {
  size(640, 480);

  // start video capture
  video = new Capture(this, width, height, 30);
  video.start();
}

void draw() {
  // read new video frame if available
  if (video.available()) {
    video.read();
  }

  // load pixels (as in the other sketches on this page)
  video.loadPixels();

  // initially set brightness to zero
  float brightness = 0;

  // initially set point to center
  PVector point = new PVector(width/2, height/2);

  // go through video pixel by pixel
  for (int x = 0; x < width; x++) {
    for (int y = 0; y < height; y++) {
      // get pixel location
      int loc = x + y * width;

      // get color of pixel
      color c = video.pixels[loc];

      // check if brightness is higher than current value
      if (brightness(c) > brightness) {
        // set new brightness
        brightness = brightness(c);

        // save location of brighter point
        point.x = x;
        point.y = y;
      }
    }
  }

  // draw video
  image(video, 0, 0);

  // draw circle at the brightest point
  ellipse(point.x, point.y, 20, 20);
}

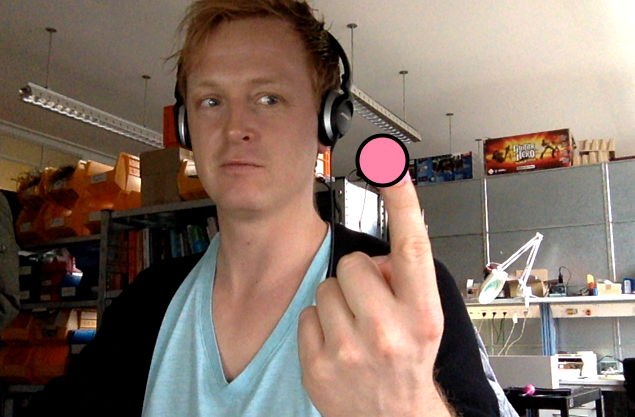

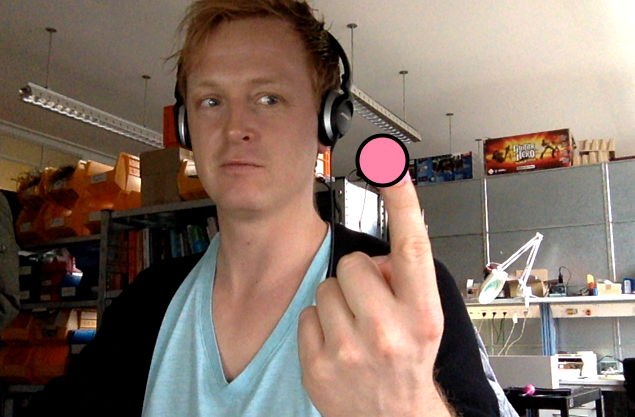
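The search loop above can be reduced to a small function on a plain pixel array. This is a minimal sketch in plain Java, assuming packed 0xRRGGBB pixels in row-major order and assuming brightness is the largest of the three channels (the usual HSB definition; whether this matches Processing's brightness() in every color mode is not checked here). The class name BrightestPoint is a hypothetical helper.

```java
// Hypothetical helper (not from the original sketch): brightest-pixel
// search on packed 0xRRGGBB ints, without the Processing runtime.
final class BrightestPoint {

  // HSB-style brightness: the largest of the three channels (0..255).
  static int brightness(int rgb) {
    int r = (rgb >> 16) & 0xFF;
    int g = (rgb >> 8) & 0xFF;
    int b = rgb & 0xFF;
    return Math.max(r, Math.max(g, b));
  }

  // Returns {x, y} of the first pixel with the highest brightness,
  // scanning column by column like the sketch above. A completely
  // black image keeps the initial center point.
  static int[] find(int[] pixels, int width, int height) {
    int best = 0;
    int[] point = {width / 2, height / 2};
    for (int x = 0; x < width; x++) {
      for (int y = 0; y < height; y++) {
        int value = brightness(pixels[x + y * width]);
        if (value > best) {
          best = value;
          point[0] = x;
          point[1] = y;
        }
      }
    }
    return point;
  }

  public static void main(String[] args) {
    int[] pixels = {0x000000, 0x202020, 0x000000,
                    0x0000FF, 0x101010, 0x00FF00};
    int[] p = find(pixels, 3, 2);
    System.out.println("x=" + p[0] + " y=" + p[1]);
  }
}
```

Because the comparison is strict, ties go to the pixel that is visited first. In a real camera image with a large overexposed area, the reported point is therefore the first saturated pixel in scan order and not the center of the bright region.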

Color Tracking

Color tracking is a very simple method for finding a colored object in an image. It simply searches for the point in the image whose color is most similar to the specified color. In the sketch below, clicking on the video sets the color to track.

import processing.video.*;

Capture video;
color trackColor;

void setup() {
  size(640, 480);

  // start video capture
  video = new Capture(this, width, height, 15);
  video.start();

  // initialize track color to red
  trackColor = color(255, 0, 0);
}

void draw() {
  // read video frame if available
  if (video.available()) {
    video.read();
  }

  // load pixels
  video.loadPixels();

  // draw video
  image(video, 0, 0);

  // initialize record to a value larger than any possible color distance
  // (the largest distance in RGB space is about 442)
  float record = 500;

  // initialize variable to store closest point
  PVector closestPoint = new PVector();

  // get track color as vector
  PVector trackColorVec = new PVector(red(trackColor), green(trackColor), blue(trackColor));

  // go through image pixel by pixel
  for (int x = 0; x < video.width; x++) {
    for (int y = 0; y < video.height; y++) {
      // get pixel location
      int loc = x + y * video.width;

      // get pixel color
      color currentColor = video.pixels[loc];

      // get current color as vector
      PVector currColorVec = new PVector(red(currentColor), green(currentColor), blue(currentColor));

      // calculate distance between current color and track color
      float dist = currColorVec.dist(trackColorVec);

      // save point if closer than previous
      if (dist < record) {
        record = dist;
        closestPoint.x = x;
        closestPoint.y = y;
      }
    }
  }

  // draw a marker if the closest color is less than 10 away from the track color
  if (record < 10) {
    fill(trackColor);
    strokeWeight(4.0);
    stroke(0);
    ellipse(closestPoint.x, closestPoint.y, 50, 50);
  }
}

void mousePressed() {
  // save color of the pixel currently under the mouse
  int loc = mouseX + mouseY * video.width;
  trackColor = video.pixels[loc];
}
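The nearest-color search can be isolated from the camera handling. The following is a minimal sketch in plain Java, assuming packed 0xRRGGBB pixels in row-major order; the class name ClosestColor and the maxDistance parameter are hypothetical and only mirror the fixed limit of 10 used in the sketch above.

```java
// Hypothetical helper (not from the original sketch): nearest-color
// search on packed 0xRRGGBB ints, without the Processing runtime.
final class ClosestColor {

  // Euclidean distance between two packed colors in RGB space.
  static double colorDistance(int a, int b) {
    int dr = ((a >> 16) & 0xFF) - ((b >> 16) & 0xFF);
    int dg = ((a >> 8) & 0xFF) - ((b >> 8) & 0xFF);
    int db = (a & 0xFF) - (b & 0xFF);
    return Math.sqrt(dr * dr + dg * dg + db * db);
  }

  // Returns the index of the pixel closest to the target color, or -1
  // if even the best match is not closer than maxDistance.
  static int find(int[] pixels, int target, double maxDistance) {
    int bestIndex = -1;
    double record = Double.MAX_VALUE;
    for (int i = 0; i < pixels.length; i++) {
      double d = colorDistance(pixels[i], target);
      if (d < record) {
        record = d;
        bestIndex = i;
      }
    }
    return record < maxDistance ? bestIndex : -1;
  }

  public static void main(String[] args) {
    int width = 3;
    int[] pixels = {0x0000FF, 0xF00505, 0x00FF00,
                    0x202020, 0xFA0000, 0xFFFFFF};
    int index = find(pixels, 0xFF0000, 10);
    // convert the array index back to image coordinates
    System.out.println("x=" + (index % width) + " y=" + (index / width));
  }
}
```

Note that this helper scans row by row, while the sketch above scans column by column, so the two can report different pixels when several pixels are equally close to the target color.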

Blob Detection

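Blob detection is a somewhat more complex kind of algorithm that tries to detect an entire object (a blob) rather than a single point. The sketch below marks every pixel whose color lies within a threshold of the tracked color and draws a bounding box around all marked pixels.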

import processing.video.*;

Capture video;

// the color to track
color trackColor;

// a two-dimensional array to store marked pixels
boolean[][] marks;

// the total number of marked pixels
int total = 0;

// the top-left corner of the bounding box
PVector topLeft;

// the bottom-right corner of the bounding box
PVector bottomRight;

void setup() {
  size(640, 480);

  // start video capture
  video = new Capture(this, width, height, 15);
  video.start();

  // set initial track color to red
  trackColor = color(255, 0, 0);

  // initialize marks array
  marks = new boolean[width][height];
}

void draw() {
  // read video frame if available
  if (video.available()) {
    video.read();
  }

  // draw video image
  image(video, 0, 0);

  // find track color with threshold
  findBlob(20);

  // load canvas pixels
  loadPixels();

  // draw blob
  for (int x = 0; x < width; x++) {
    for (int y = 0; y < height; y++) {
      // get pixel location
      int loc = x + y * width;

      // make pixel red if marked
      if (marks[x][y]) {
        pixels[loc] = color(255, 0, 0);
      }
    }
  }

  // set canvas pixels
  updatePixels();

  // draw bounding box, but only if at least one pixel was marked
  if (total > 0) {
    stroke(255, 0, 0);
    noFill();
    rect(topLeft.x, topLeft.y, bottomRight.x - topLeft.x, bottomRight.y - topLeft.y);
  }
}

void mousePressed() {
  // save color of the pixel under the mouse as track color
  int loc = mouseX + mouseY * video.width;
  trackColor = video.pixels[loc];
}

void findBlob(int threshold) {
  // reset total
  total = 0;

  // prepare point trackers
  int lowestX = width;
  int lowestY = height;
  int highestX = 0;
  int highestY = 0;

  // prepare track color vector
  PVector trackColorVec = new PVector(red(trackColor), green(trackColor), blue(trackColor));

  // load pixels (as in the other sketches on this page)
  video.loadPixels();

  // go through image pixel by pixel
  for (int x = 0; x < width; x++) {
    for (int y = 0; y < height; y++) {
      // get pixel location
      int loc = x + y * width;

      // get color of pixel
      color currentColor = video.pixels[loc];

      // get vector of pixel color
      PVector currColorVec = new PVector(red(currentColor), green(currentColor), blue(currentColor));

      // get distance to track color
      float dist = currColorVec.dist(trackColorVec);

      // reset mark
      marks[x][y] = false;

      // check if distance is below threshold
      if (dist < threshold) {
        // mark pixel
        marks[x][y] = true;
        total++;

        // update point trackers
        if (x < lowestX) lowestX = x;
        if (x > highestX) highestX = x;
        if (y < lowestY) lowestY = y;
        if (y > highestY) highestY = y;
      }
    }
  }

  // save corner locations
  topLeft = new PVector(lowestX, lowestY);
  bottomRight = new PVector(highestX, highestY);
}
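The bounding-box step of findBlob can be looked at in isolation. The following is a minimal sketch in plain Java, assuming the same marks[x][y] layout as above; the class name BlobBounds and the choice to return null for an empty mask are hypothetical and only one possible way to handle the case where no pixel matches.

```java
// Hypothetical helper (not from the original sketch): bounding box of
// all marked cells in a boolean mask indexed as marks[x][y].
final class BlobBounds {

  // Returns {minX, minY, maxX, maxY}, or null when nothing is marked.
  static int[] boundingBox(boolean[][] marks) {
    int minX = Integer.MAX_VALUE;
    int minY = Integer.MAX_VALUE;
    int maxX = -1;
    int maxY = -1;
    for (int x = 0; x < marks.length; x++) {
      for (int y = 0; y < marks[x].length; y++) {
        if (marks[x][y]) {
          minX = Math.min(minX, x);
          minY = Math.min(minY, y);
          maxX = Math.max(maxX, x);
          maxY = Math.max(maxY, y);
        }
      }
    }
    return maxX < 0 ? null : new int[] {minX, minY, maxX, maxY};
  }

  public static void main(String[] args) {
    boolean[][] marks = new boolean[5][4];
    marks[1][2] = true;
    marks[3][1] = true;
    int[] box = boundingBox(marks);
    System.out.println(box[0] + "," + box[1] + " to " + box[2] + "," + box[3]);
  }
}
```

The two marked cells in the example are not adjacent, yet they end up in the same box. The same holds for the sketch above: all matching pixels are treated as one blob, so two separate objects of the tracked color share a single bounding box.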